By Dr. Steve Kim, Valer Co-Founder & Chief Medical Officer

Everyone in healthcare IT is talking about agentic AI. And for prior authorization specifically, the promise is genuinely compelling: autonomous systems that run the entire workflow end to end, without staff manually jumping between portals. There’s no status chasing and no copy-pasting. Exceptions get flagged, and everything else moves on its own.

We think that future is achievable. We also think most of the implementations you’ll hear about over the next few years are going to fall short — and not because the technology isn’t ready. Because the foundation wasn’t.

The problem was never the complexity of the transaction

Prior authorization costs the industry $31 billion a year in provider resources and 868 million staff hours annually. Those figures aren’t a reflection of how complicated the underlying workflow is. The transaction itself is straightforward: a provider submits clinical criteria, a payer returns a decision, and that decision gets recorded in the EHR. The cost exists because the infrastructure to support that exchange reliably has never been built. Building it means accounting for hundreds of payers, dozens of EHR configurations, and thousands of service lines all at once.

That’s the opening agentic AI creates. Unlike traditional RPA, which follows rigid scripts that break the moment a payer updates their portal, agentic systems can reason, adapt, and recover. They can ingest real-time payer policy feeds, navigate dynamic interfaces, and orchestrate multi-step workflows across EHRs, payer endpoints, and clearinghouses without hard-coded rules. The potential outcome is real end-to-end automation, from submission to decision to EHR sync, with staff intervening only when something genuinely requires their judgment.

Early implementations are showing real results. The question is whether your organization has built what’s needed to get there.

95% of enterprise AI pilots fail. Here’s why prior auth is especially hard.

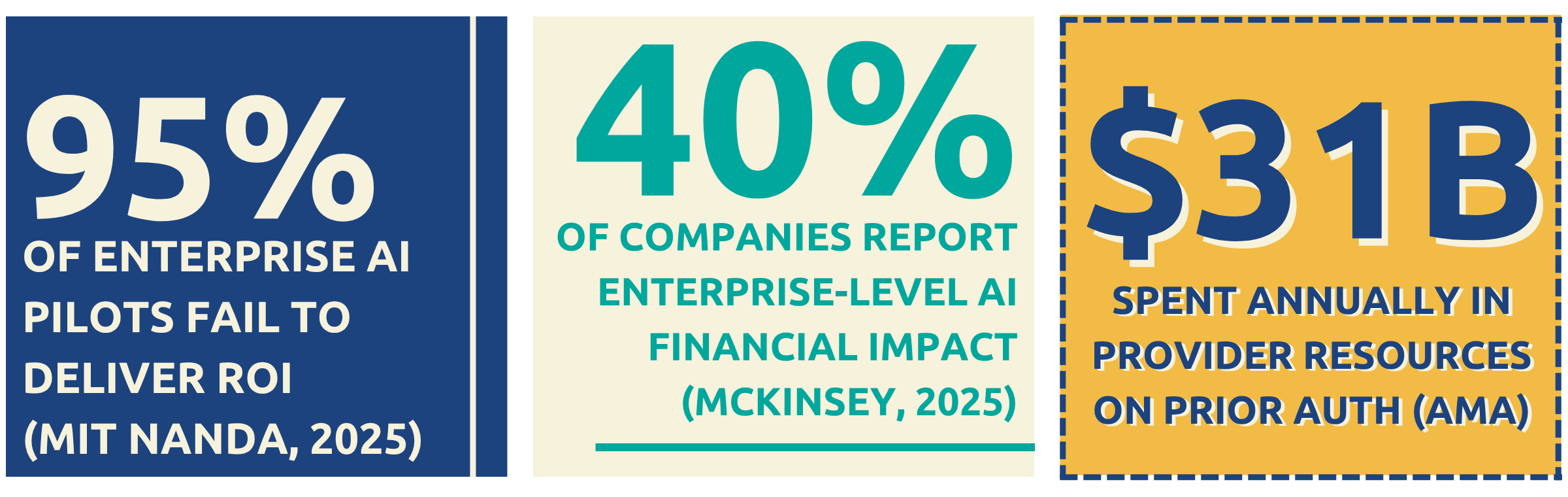

MIT’s 2025 NANDA report found that 95% of enterprise AI pilots fail to deliver measurable ROI. McKinsey’s 2025 research, across more than 50 agentic AI deployments, found that only 40% of companies report enterprise-level financial impact, and nearly two-thirds haven’t moved beyond pilot. Some are actively rehiring humans in roles where agents failed.

We aren’t surprised. Prior authorization sits at the intersection of several of healthcare’s most stubborn infrastructure challenges, and each one is a potential failure point:

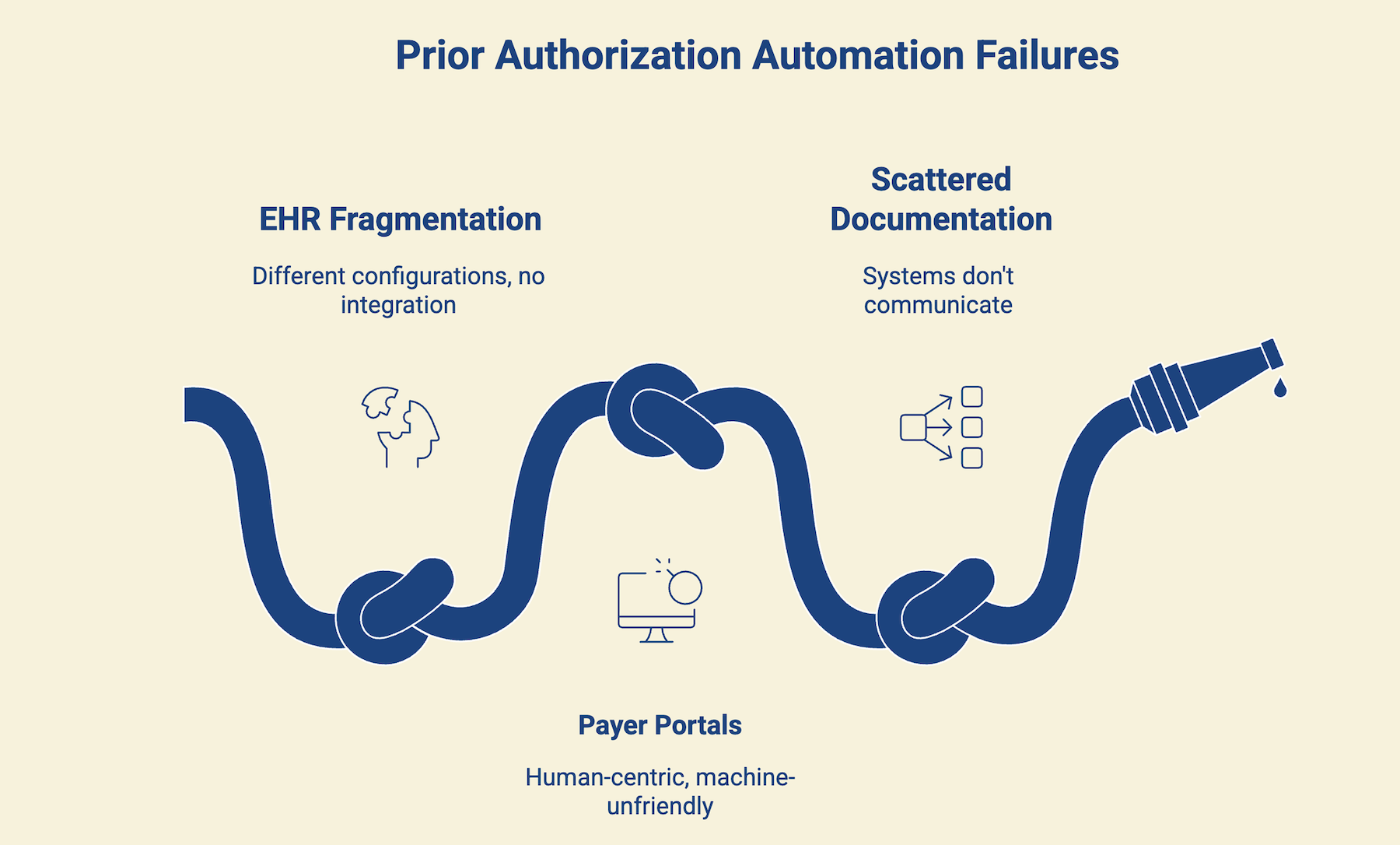

- EHR fragmentation doesn’t shrink at scale, it compounds.

Epic, Oracle Health (formerly Cerner), Meditech, and athenahealth each have distinct data models, API maturity levels, and prior auth workflows. More than that, every health system’s configuration reflects years of implementation decisions made by different teams across different specialties and sites. An agent trained on one environment can be completely unprepared for another. The variability doesn’t go away as you expand. It multiplies.

- Payer portals weren’t designed for machines.

Most payer portals are built for humans, with dynamic fields, session timeouts, inconsistent layouts, and business rules that change without notice. The moment a portal updates its interface or changes a field label, automation breaks. This isn’t a solved problem. It’s an ongoing maintenance challenge that most vendors underestimate until they’re in production.

- Clinical documentation is fragmented and unstructured.

Prior auth decisions increasingly require clinical evidence that doesn’t live in one place. It’s distributed across structured EHR fields, scanned documents, dictated notes, and records from systems that don’t communicate with each other. Assembling that documentation accurately and formatting it to match each payer’s specific requirements is one of the hardest integration problems in healthcare. Most demos solve the clean-data version of this. Production data is rarely that clean.

McKinsey found that high-performing AI organizations are 2.8x more likely to have fundamentally redesigned their workflows before deploying AI — and nearly 3x more likely to have defined human-in-the-loop validation processes. They didn’t optimize for automation rate. They optimized for trustworthy automation.

Getting to scale requires work in three areas

The path isn’t a mystery. But it does require deliberate sequencing.

First, standardize your EHR workflows before you automate them. Before an agent can reliably extract data from an EHR, there has to be consistency in how that data is entered, coded, and structured. That means enforcing structured data entry at the point of care, standardizing PA-relevant fields across your EHR configuration, and mapping clinical workflows to the data elements each payer requires. It’s time-consuming work, and it’s also where most AI readiness efforts stall. Not because organizations don’t intend to do it, but because the staff closest to these workflows are running them full-time, not redesigning them.
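To make the mapping step concrete, here is a minimal sketch of a readiness check that compares a request against the data elements each payer requires. The payer names, field names, and the `missing_fields` helper are hypothetical and purely illustrative. Real requirements vary by payer, service line, and care setting.

```python
# Hypothetical map of payer -> data elements a prior auth request must carry.
# These payers and field names are illustrative assumptions, not real rules.
PAYER_REQUIRED_FIELDS = {
    "payer_a": {"member_id", "cpt_code", "icd10_code", "ordering_npi"},
    "payer_b": {"member_id", "cpt_code", "icd10_code", "ordering_npi", "site_of_service"},
}


def missing_fields(payer: str, request: dict) -> set:
    """Return the required elements that are absent or blank in the request."""
    required = PAYER_REQUIRED_FIELDS[payer]
    return {
        name
        for name in required
        if not str(request.get(name) or "").strip()
    }


# Example request extracted from the EHR, with one blank field.
request = {
    "member_id": "M123",
    "cpt_code": "70553",
    "icd10_code": "G43.909",
    "ordering_npi": "",
}

print(sorted(missing_fields("payer_b", request)))
```

A check like this is only useful once the fields are entered consistently at the point of care. That consistency is the standardization work described above.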

Second, be realistic about what FHIR APIs can do today. The HL7 Da Vinci implementation guides for Coverage Requirements Discovery (CRD), Documentation Templates and Rules (DTR), and Prior Authorization Support (PAS) represent the right long-term direction, and structured data exchange between EHRs and payers is far more reliable than portal navigation. But 43% of payers and 52% of providers haven’t started on the API requirements. The prior auth landscape will remain a hybrid environment for years after the 2027 deadline, with some payers on FHIR and many still operating through portals. Any automation strategy that doesn’t account for that reality will encounter problems in production.
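One way to picture a hybrid strategy is a per-payer channel preference with an explicit fallback to staff. The sketch below is a simplified illustration under assumed names: the `Channel` values, the `PAYER_CHANNELS` registry, and `choose_channel` are hypothetical and do not describe any specific product or payer.

```python
from enum import Enum


class Channel(Enum):
    FHIR_PAS = "fhir_pas"   # structured API submission
    PORTAL = "portal"       # automated portal navigation
    MANUAL = "manual"       # hand off to staff


# Hypothetical registry: channels each payer supports, in order of preference.
PAYER_CHANNELS = {
    "payer_a": [Channel.FHIR_PAS, Channel.PORTAL],
    "payer_b": [Channel.PORTAL],
}


def choose_channel(payer: str, unavailable: frozenset = frozenset()) -> Channel:
    """Pick the first supported channel that is not currently unavailable."""
    for channel in PAYER_CHANNELS.get(payer, []):
        if channel not in unavailable:
            return channel
    # Unknown payer, or every automated channel is down: route to a person.
    return Channel.MANUAL


print(choose_channel("payer_a"))
print(choose_channel("payer_a", frozenset({Channel.FHIR_PAS})))
print(choose_channel("unknown_payer"))
```

The point of the design is that the fallback is explicit. When an API endpoint or portal automation is unavailable, the request moves to the next channel or to staff instead of failing silently.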

Third, build for auditability from the start. Every prior auth decision carries clinical and compliance liability. An automated system that can’t explain its own reasoning is a compliance problem waiting to happen. The organizations that scale AI successfully invested in data quality, validation, and governance before pursuing autonomous scale. Auditability isn’t a feature you add later. It’s the foundation you build on.
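As a rough illustration of what building for auditability can mean in practice, the sketch below records each automated action with its rationale and supporting evidence, and chains entries by hash so later edits are detectable. The `AuditEntry` fields and the `AuditLog` class are assumptions made for this example, not a description of any particular system or a compliance standard.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class AuditEntry:
    request_id: str
    action: str        # e.g. "submitted" or "escalated_to_staff"
    rationale: str     # why the system took this action
    evidence: tuple    # identifiers of the source documents relied on
    prev_hash: str     # hash of the previous entry ("" for the first)


def entry_hash(entry: AuditEntry) -> str:
    """Stable SHA-256 digest of an entry's contents."""
    payload = json.dumps(asdict(entry), sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


class AuditLog:
    """Append-only log in which each entry commits to the one before it."""

    def __init__(self):
        self._entries = []

    def record(self, request_id, action, rationale, evidence):
        prev = entry_hash(self._entries[-1]) if self._entries else ""
        entry = AuditEntry(request_id, action, rationale, tuple(evidence), prev)
        self._entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Return True if no entry has been altered or removed mid-chain."""
        prev = ""
        for entry in self._entries:
            if entry.prev_hash != prev:
                return False
            prev = entry_hash(entry)
        return True


log = AuditLog()
log.record("PA-1", "submitted", "All required fields present", ["note-17"])
log.record("PA-1", "escalated_to_staff", "Payer requested peer review", ["fax-3"])
print(log.verify())
```

Capturing the rationale and evidence at the moment of action is what lets a reviewer reconstruct later why the system did what it did.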

Prior auth needs what Palantir calls an Ontology

Palantir’s most defensible asset isn’t its AI. It’s its Ontology: the structured, continuously maintained model of an organization’s operations, data, and workflows. AI built on a well-maintained operational model works. AI deployed into raw, unstructured environments usually doesn’t.

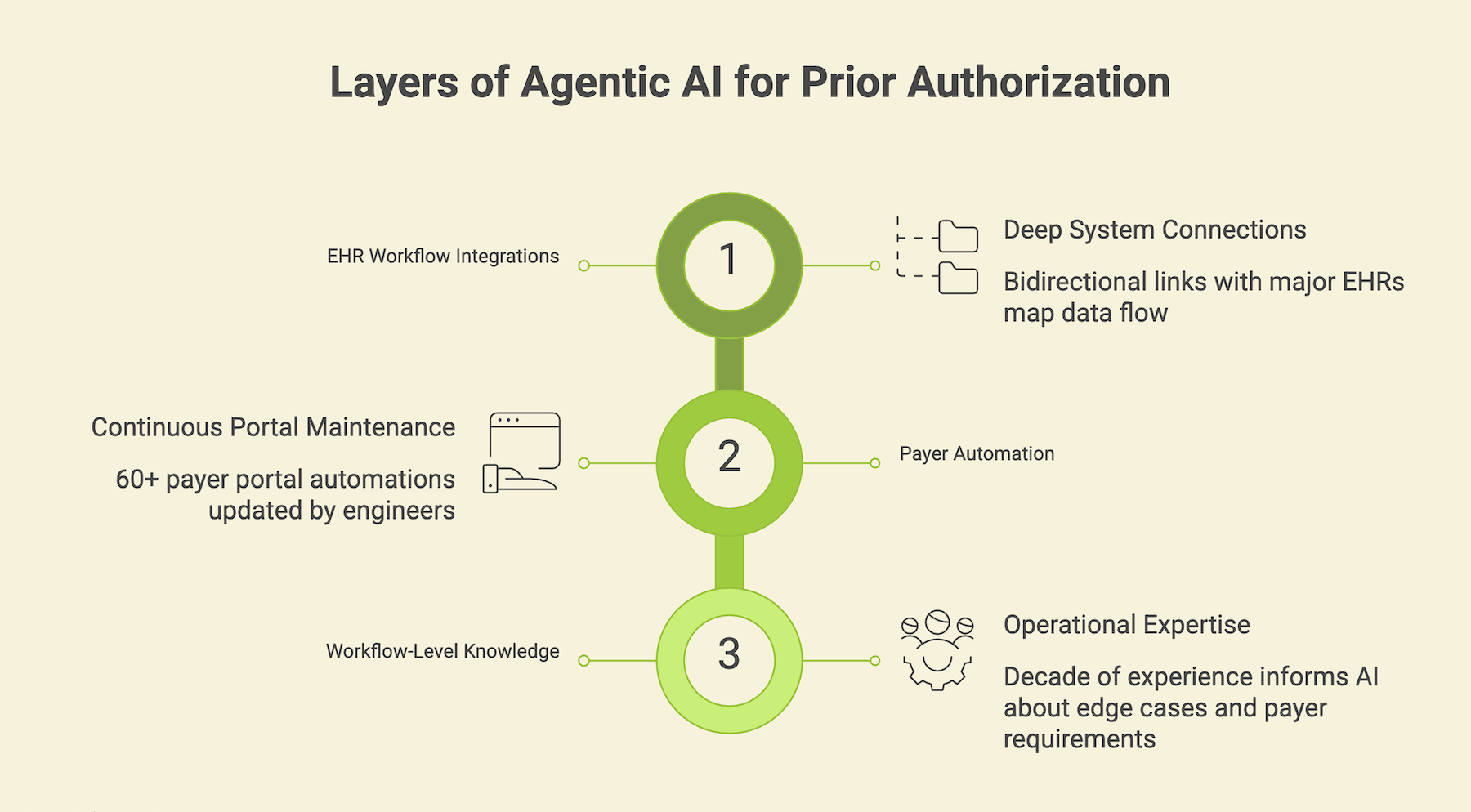

Prior authorization needs the same thing. Not another point solution. Prior auth needs a continuously maintained model of how it actually works: the EHR data flows, the payer behaviors, the edge cases that vary by care setting. That kind of foundation takes years to build, and it’s exactly what we’ve spent the last decade building at Valer.

We’ve developed deep, bidirectional integrations with the major EHR platforms, not surface-level API connections, but workflow-level integrations that map how prior auth data moves through Epic, Oracle Health, athenahealth, and others. On the payer side, we’ve built and maintain more than 60 payer portal automations. Each one is a living asset, updated continuously as portals change and payer rules evolve. That kind of ongoing maintenance isn’t a support function. It’s the product.

When FHIR APIs expand and mature, that EHR integration layer and payer knowledge base become the foundation agentic systems need to act reliably at scale. And when a portal changes or a new payer goes live, our forward-deployed model means the automation stays current without the health system’s IT team needing to touch it.

The hard part isn’t the AI

Agentic AI will transform prior authorization. We genuinely believe that. But the organizations that get there first won’t be the ones who bought the most compelling demo. They’ll be the ones who did the foundational work: mapping their authorization workflows, standardizing their EHR data, and building governance for autonomous decisions before expanding the system’s autonomy.

Prior authorization has been broken for decades. AI can fix it. But the 95% of pilots that fail aren’t failing because of the technology. They’re failing because the foundation wasn’t ready. That’s the real work, and it’s the step most implementations skip.

If you’re not sure where your organization stands today, our Provider Readiness Assessment is a good place to start. It takes 10 minutes and tells you exactly where your prior auth workflows need attention before AI can deliver on what it promises.